Decentralized AI Compute: Building DePIN Networks with AI Agents and Blockchain

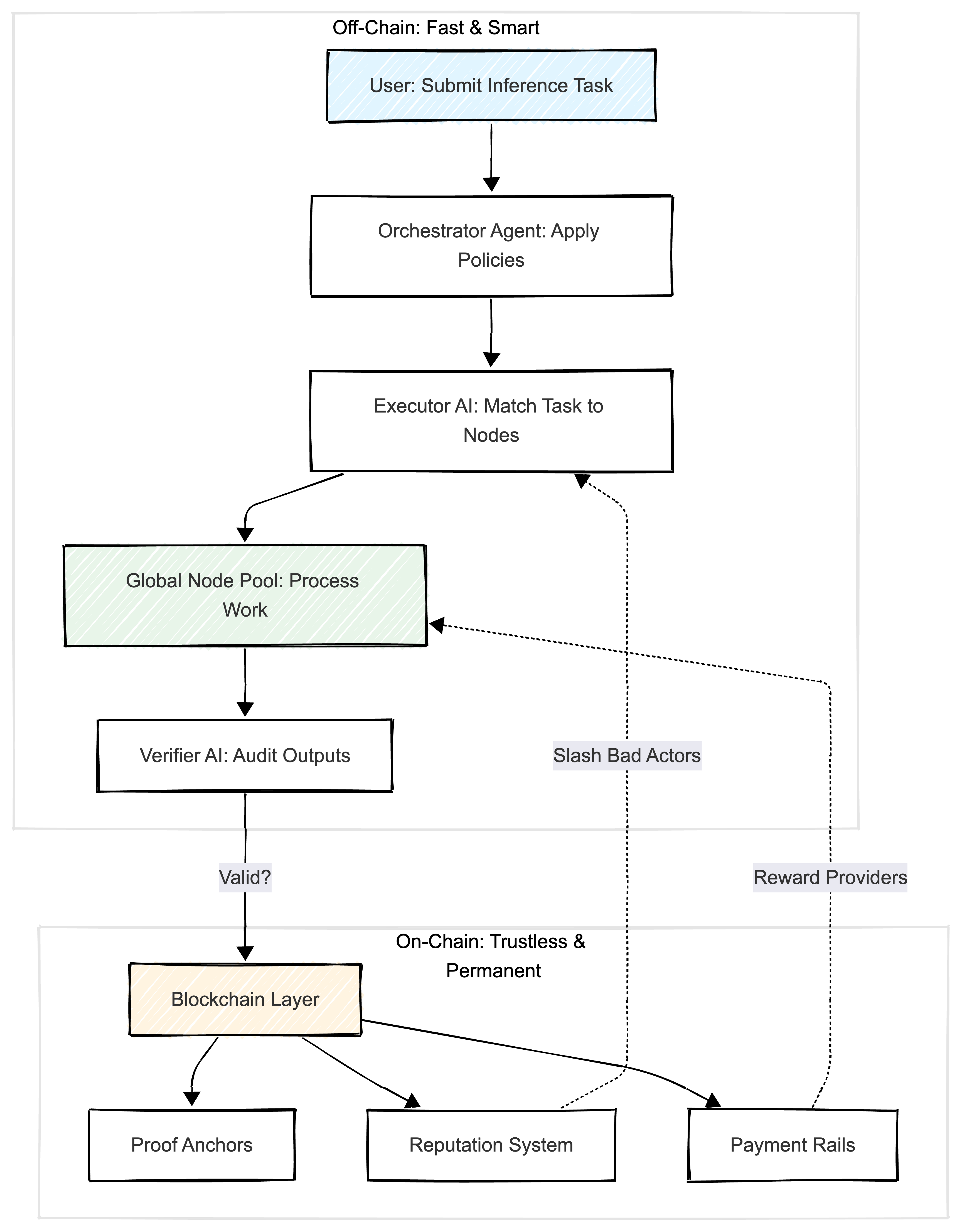

How AI agents optimize compute allocation while blockchain ensures accountability. A practical guide to building DePIN networks that keep intelligence off-chain and trust on-chain.

As an architect exploring the intersection of AI and blockchain, I have been fascinated by how DePIN networks solve real compute scarcity. Here is my deep dive into the architecture that makes it work.

AI agents optimize allocation, blockchain ensures accountability. Keep the heavy thinking off-chain, the trust on-chain.

Introduction: Why Decentralized Compute Matters Now

Imagine your gaming PC sitting idle at night while researchers across the globe desperately need GPU power for AI training. Or picture a small startup that cannot afford its AWS bills trying to compete with tech giants. This isn’t science fiction. It’s the architectural challenge that Decentralized Physical Infrastructure Networks (DePIN) are solving today.

DePIN represents a fundamental shift: instead of centralized data centers monopolizing compute resources, we’re building global networks where AI agents intelligently coordinate who runs what, where, and when. Meanwhile, blockchain provides the trust layer ensuring everyone plays fair.

Think of it as Airbnb for computing power, but with AI as the smart matchmaker and crypto as the escrow service.

The Architectural Vision: Why This Integration Works

The Problem Space

Traditional cloud computing suffers from three critical bottlenecks:

- Centralization Risk: AWS outages cascade globally

- Cost Barriers: GPU clusters cost $5-50/hour, pricing out researchers and startups

- Underutilization: Millions of GPUs sit idle in gaming rigs, workstations, and enterprise servers

The DePIN Solution Architecture

DePIN solves this through separation of concerns:

- AI Agents handle the complex, dynamic work: matching tasks to hardware, optimizing for latency, cost, and energy

- Blockchain handles what it does best: immutable record-keeping, incentive alignment, reputation tracking

Key Insight: Any architecture pushing AI decision-making onto blockchain is fundamentally broken. Blockchains are coordination systems, not reasoning substrates. Keep the intelligence off-chain, use blockchain for accountability.

Deep Dive: Component Architecture

1. The Orchestrator Layer (Policy Brain)

Role: Defines the rules of engagement without executing them. This layer uses AI agents to apply policies dynamically.

# Example Policy Configuration
# (JSON-style; the # comments are annotations and must be stripped for strict JSON)
{
  "task_requirements": {
    "min_gpu_memory": "8GB",
    "max_latency_ms": 500,
    "privacy_level": "high"  # No data leaves jurisdiction
  },
  "incentive_model": {
    "base_rate": 0.10,  # $ per GPU-hour
    "green_bonus": 0.02,  # Renewable energy premium
    "reputation_multiplier": 1.2  # Trusted nodes earn 20% more
  },
  "verification": {
    "sample_rate": 0.15,  # Audit 15% of tasks
    "dispute_threshold": 3  # Flag after 3 failures
  }
}

Beginner Explanation: Think of the orchestrator as the “house rules” document. It doesn’t play the game, but everyone must follow it.

Technical Detail: The orchestrator interfaces with HITL (Human-in-the-Loop) systems for edge cases like contested verifications or unusual task types.
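
To make the incentive_model fields concrete, here is a minimal sketch of how a payout could be derived from them. The formula itself is an assumption, since the policy only lists the rates: the base rate plus the green bonus scaled by the node's renewable ratio, multiplied by the reputation multiplier for trusted nodes. `compute_payout` and the `trusted` flag are hypothetical names.

```python
def compute_payout(gpu_hours, green_energy_ratio, trusted, policy):
    """Hypothetical payout rule built from the incentive_model fields."""
    model = policy["incentive_model"]
    # Assumption: the green bonus scales linearly with the renewable share.
    hourly = model["base_rate"] + model["green_bonus"] * green_energy_ratio
    # Assumption: only nodes flagged as trusted receive the multiplier.
    if trusted:
        hourly *= model["reputation_multiplier"]
    return round(gpu_hours * hourly, 4)


policy = {
    "incentive_model": {
        "base_rate": 0.10,
        "green_bonus": 0.02,
        "reputation_multiplier": 1.2,
    }
}

print(compute_payout(10, 1.0, True, policy))   # trusted, fully renewable node
print(compute_payout(10, 0.0, False, policy))  # untrusted, non-renewable node
```

Keeping the rule in one small function makes it easy to swap the formula when the policy changes without touching the agents.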

2. Executor AI Agent (Smart Matchmaker)

Core Function: Match compute tasks to optimal nodes using multi-objective optimization. This AI agent acts as an intelligent scheduler.

import networkx as nx

class ExecutorAgent:
    def __init__(self, node_graph):
        self.G = nx.Graph()
        self.load_nodes(node_graph)  # fills self.G with node attributes (not shown)

    def allocate_task(self, task):
        """
        Multi-criteria matching:
        - Hardware specs (GPU, RAM, CPU)
        - Geographic proximity (latency)
        - Energy efficiency score
        - Historical reliability (reputation)
        """
        # nodes(data=True) yields (node_id, attribute_dict) pairs;
        # plain nodes() would yield only the IDs
        candidates = [
            (node_id, attrs) for node_id, attrs in self.G.nodes(data=True)
            if self.meets_specs(attrs, task)
        ]
        if not candidates:
            return None  # no node satisfies the task requirements

        # Score nodes using weighted heuristic
        scores = {
            node_id: (
                0.4 * attrs['performance_score'] +
                0.3 * attrs['reputation'] +
                0.2 * (1 / attrs['latency_ms']) +
                0.1 * attrs['green_energy_ratio']
            )
            for node_id, attrs in candidates
        }
        return max(scores, key=scores.get)

For Non-Technical Readers: The executor is like a rideshare algorithm. It finds the closest, highest-rated, most efficient “driver” (compute node) for your “trip” (AI task).

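Advanced Pattern: Nodes are stored in a NetworkX graph so their attributes live in one queryable structure, with room to add edges later (for example, network distance between nodes) for topology-aware heuristics. The scoring shown here is a single linear pass, O(n) per task in the number of candidate nodes, so no pairwise O(n²) comparison is needed.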
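
One caveat with the weighted heuristic: performance, reputation, and green ratio are presumably on a 0 to 1 scale, while 1 / latency_ms is only 0.1 even for a 10 ms node, so latency barely influences the ranking. Below is a minimal sketch of one way to address this, assuming min-max normalization (my choice for illustration, not necessarily what a production scheduler would use). `normalized_latency_score` and `score_nodes` are hypothetical helpers.

```python
def normalized_latency_score(latency_ms, min_ms, max_ms):
    """Map latency to [0, 1], where 1.0 is the fastest node in the pool."""
    if max_ms == min_ms:
        return 1.0  # all candidates are equally fast
    return (max_ms - latency_ms) / (max_ms - min_ms)


def score_nodes(candidates):
    """candidates: dict of node_id -> attribute dict (keys as in the executor)."""
    latencies = [attrs["latency_ms"] for attrs in candidates.values()]
    lo, hi = min(latencies), max(latencies)
    return {
        node_id: (
            0.4 * attrs["performance_score"]
            + 0.3 * attrs["reputation"]
            + 0.2 * normalized_latency_score(attrs["latency_ms"], lo, hi)
            + 0.1 * attrs["green_energy_ratio"]
        )
        for node_id, attrs in candidates.items()
    }


pool = {
    "fast": {"performance_score": 0.7, "reputation": 0.8,
             "latency_ms": 20, "green_energy_ratio": 0.5},
    "slow": {"performance_score": 0.7, "reputation": 0.8,
             "latency_ms": 400, "green_energy_ratio": 0.5},
}
scores = score_nodes(pool)
best = max(scores, key=scores.get)
print(best, scores)
```

With normalization, the two otherwise identical nodes differ by the full 0.2 latency weight instead of a few hundredths.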

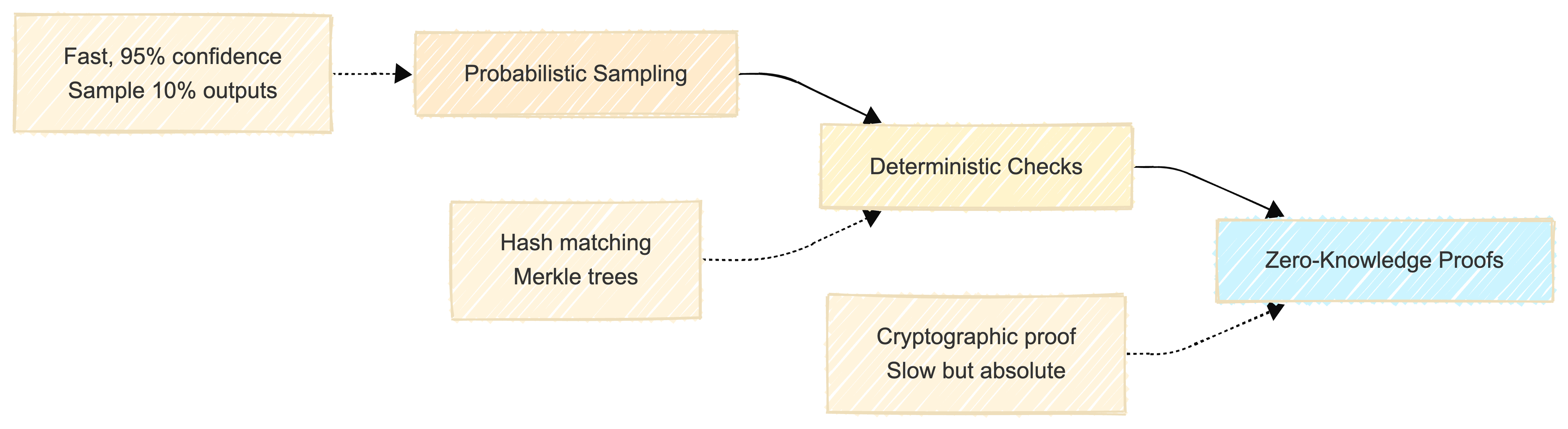

3. Verifier AI Agent (Trust Auditor)

Challenge: How do you prove someone did the work without re-running it?

Solution Spectrum:

Example Verification Logic:

import random

class VerifierAgent:
    def verify_inference(self, task, result, node):
        """
        Multi-layered verification:
        1. Hash consistency (fast)
        2. Spot re-computation (medium)
        3. ZK proof validation (slow, high-stakes only)
        """
        # Layer 1: Output hash matches expected format
        if not self.validate_hash(result):
            return False, "Hash mismatch"

        # Layer 2: Probabilistic re-run (15% of tasks)
        if random.random() < 0.15:
            local_result = self.recompute_locally(task)
            if not self.results_match(result, local_result):
                self.flag_node(node, "output_mismatch")
                return False, "Verification failed"

        # Layer 3: Check blockchain anchor (ZK proof validation for
        # high-stakes tasks would slot in here; not shown)
        if not self.verify_anchor(result):
            return False, "Missing on-chain proof"

        return True, "Verified"

Critical Constraint: Verifiers don’t re-decide outcomes. They validate that agreed-upon procedures were followed. This is a process check, not a correctness guarantee.
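
The sample_rate and dispute_threshold settings from the policy configuration could drive the audit loop roughly as follows. This is a sketch under assumptions: `AuditTracker` is a hypothetical class, and counting failures per node until the threshold is reached is my reading of "flag after 3 failures".

```python
import random
from collections import defaultdict


class AuditTracker:
    """Hypothetical helper: samples tasks for audit and flags repeat offenders."""

    def __init__(self, sample_rate=0.15, dispute_threshold=3, rng=None):
        self.sample_rate = sample_rate
        self.dispute_threshold = dispute_threshold
        self.rng = rng or random.Random()
        self.failures = defaultdict(int)

    def should_audit(self):
        # Audit roughly sample_rate of all tasks.
        return self.rng.random() < self.sample_rate

    def record_failure(self, node_id):
        """Count a failed audit; return True once the node should be escalated."""
        self.failures[node_id] += 1
        return self.failures[node_id] >= self.dispute_threshold


tracker = AuditTracker(rng=random.Random(42))
audited = sum(tracker.should_audit() for _ in range(10_000))
flags = [tracker.record_failure("NODE_007") for _ in range(3)]
print(f"Audited {audited} of 10000 tasks; escalation flags: {flags}")
```

Passing in a seeded `random.Random` keeps the sampling reproducible in tests while leaving production behavior random.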

4. Blockchain Integration (Minimal & Purposeful)

What Goes On-Chain: We use blockchain technology only for what it does best: immutable record-keeping and trustless coordination.

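// Smart contract sketch (Solidity 0.8+); access control is simplified
pragma solidity ^0.8.0;

contract DePINReputation {
    mapping(address => uint256) public nodeScores;
    mapping(bytes32 => ProofAnchor) public taskProofs;
    address public verifier;  // only this account may slash (assumed single verifier)

    struct ProofAnchor {
        bytes32 taskHash;
        address executor;
        uint256 timestamp;
        bool verified;
    }

    event ReputationSlashed(address indexed node, uint256 penalty);

    modifier onlyVerifier() {
        require(msg.sender == verifier, "not verifier");
        _;
    }

    constructor(address _verifier) {
        verifier = _verifier;
    }

    function submitProof(bytes32 _taskHash) external {
        // Prevent a later caller from overwriting an existing anchor
        require(taskProofs[_taskHash].timestamp == 0, "already anchored");
        // Gas-efficient: Only store hash, not full data
        taskProofs[_taskHash] = ProofAnchor({
            taskHash: _taskHash,
            executor: msg.sender,
            timestamp: block.timestamp,
            verified: false
        });
    }

    function slashReputation(address _node, uint256 _penalty) external onlyVerifier {
        // Clamp at zero: in Solidity 0.8+ an underflowing subtraction reverts
        uint256 score = nodeScores[_node];
        nodeScores[_node] = _penalty >= score ? 0 : score - _penalty;
        emit ReputationSlashed(_node, _penalty);
    }
}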

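Gas Optimization: Only a 32-byte hash and a small anchor record go on-chain (a few hundred bytes at most), not the full task data (which could be megabytes). By my estimate, this keeps costs under $0.01 per task on Layer 2 blockchains like Arbitrum, though actual fees vary with network conditions. Learn more about blockchain gas optimization.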
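
To see why anchoring stays cheap regardless of task size, note that a SHA-256 digest is always 32 bytes, which fits the bytes32 type used in the contract. A small sketch (the payload is made up for illustration):

```python
import hashlib

payload = b"x" * 5_000_000  # stand-in for about 5 MB of task output
digest = hashlib.sha256(payload).digest()

print(len(payload))  # size of the off-chain data in bytes
print(len(digest))   # size of what would be anchored on-chain

# Hex form suitable for passing as a bytes32 argument
task_hash_hex = "0x" + digest.hex()
print(task_hash_hex)
```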

Real-World Architecture Constraints

1. The Verification Paradox

Problem: Proving compute without re-running is theoretically hard.

Pragmatic Solutions:

- Redundant Execution: Run the same task on 3 nodes and take the majority result (3x total compute, i.e. 2x overhead on top of a single run)

- Trusted Execution Environments (TEEs): Use Intel SGX for attestations

- Economic Security: Make fraud unprofitable via staking

Example: Akash Network uses stake-based security. Nodes post collateral and get slashed if caught cheating. This is a common pattern in DePIN networks.
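
The redundant-execution option can be sketched in a few lines. This assumes each node reports a hash of its output and that a strict majority is required; `majority_result` is a hypothetical helper, not part of any specific network's API.

```python
from collections import Counter


def majority_result(result_hashes):
    """Return (winning_hash, dissenting_node_ids).

    result_hashes: dict of node_id -> output hash reported by that node.
    Returns (None, []) when no hash has a strict majority.
    """
    if not result_hashes:
        return None, []
    counts = Counter(result_hashes.values())
    winner, votes = counts.most_common(1)[0]
    if votes * 2 <= len(result_hashes):
        return None, []  # no strict majority: escalate instead of guessing
    dissenters = [n for n, h in result_hashes.items() if h != winner]
    return winner, dissenters


winner, dissenters = majority_result(
    {"NODE_001": "abc", "NODE_002": "abc", "NODE_003": "zzz"}
)
print(winner, dissenters)
```

The dissenting node IDs are natural candidates for a reputation penalty or a stake slash, depending on which incentive model is in use.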

2. Incentive Design Trade-offs

| Model | Pros | Cons | Best For |
|---|---|---|---|
| Token Rewards | Strong participation | Speculation risk, volatility | High-volume networks |
| Reputation-Only | Stable, long-term focus | Slow growth | Research communities |
| Hybrid | Balanced incentives | Complex to manage | Production DePIN |

Architect’s Pick: Start with reputation, add optional token rewards later. This approach is similar to DeFi (Decentralized Finance) incentive models, but applied to compute infrastructure.

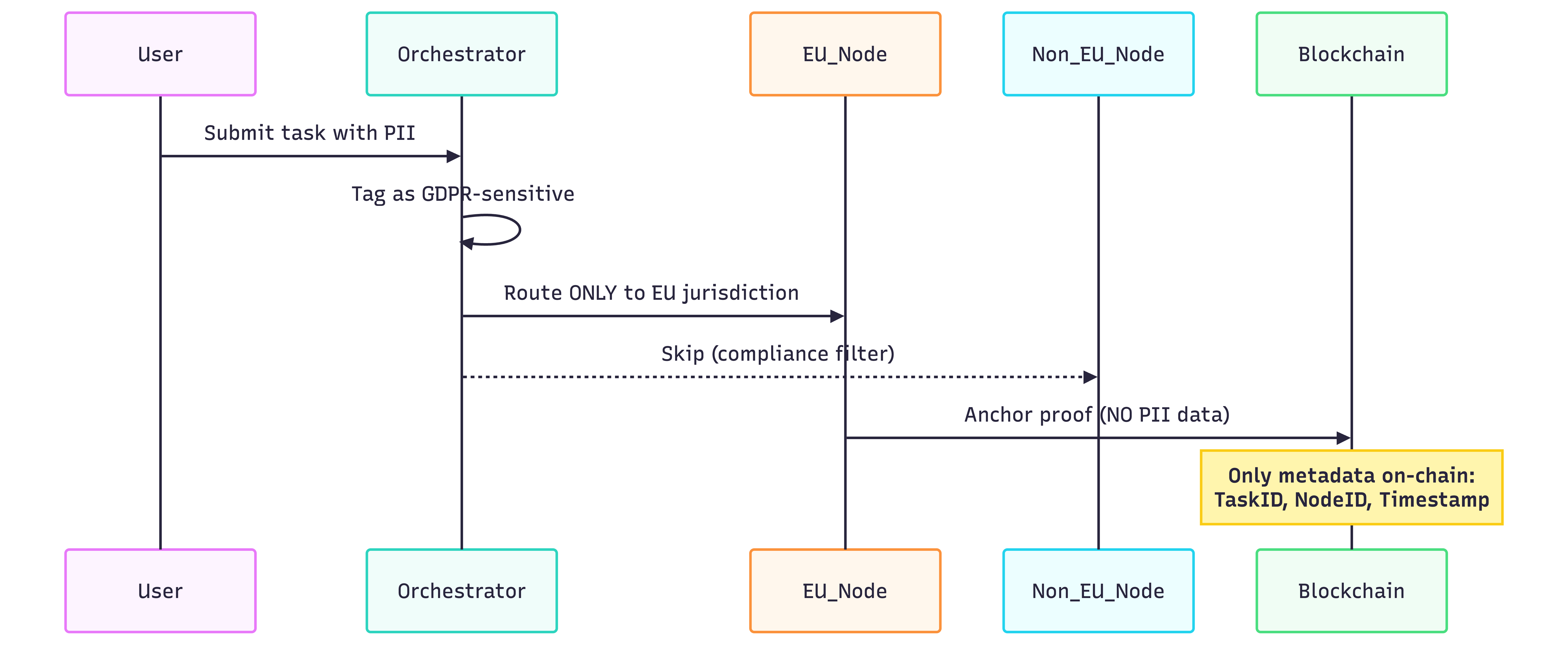

3. Regulatory & Privacy Considerations

GDPR Compliance Architecture:

Key Principle: Privacy by design. Personal data never touches blockchain. Only computational proofs do. This is critical for GDPR compliance in DePIN systems.

MVP Implementation Guide

Goal: Build a “Green Compute Scheduler” in 2 Weekends

Scope: Simulate DePIN allocation without real hardware or money.

Tech Stack:

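- Python 3.10+ (Agent logic)

- NetworkX (Graph algorithms for node matching)

- Web3.py (Testnet interactions with EVM chains such as Sepolia)

- Solana Devnet / Ethereum Sepolia (Free testnets for blockchain development; Web3.py only talks to EVM chains, so Solana would need its own SDK)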

Project Structure

depin-mvp/
├── README.md                 # Setup guide
├── architecture.md           # This document!
├── .env                      # Testnet keys (NEVER commit)
├── requirements.txt
├── src/
│   ├── orchestrator.py       # Policy engine
│   ├── executor_agent.py     # Allocation AI
│   ├── verifier_agent.py     # Audit logic
│   ├── blockchain_anchor.py  # Web3 integration
│   └── utils/
│       ├── node_simulator.py # Fake hardware profiles
│       └── metrics.py        # Performance tracking
├── data/
│   ├── nodes.csv             # Mock node database
│   └── tasks.json            # Sample compute jobs
├── tests/
│   ├── test_allocation.py
│   └── test_verification.py
└── demo.ipynb                # Interactive walkthrough

Step-by-Step Implementation

Step 1: Generate Mock Node Data

# data/generate_nodes.py
import pandas as pd
import random

nodes = []
for i in range(100):
    nodes.append({
        'node_id': f"NODE_{i:03d}",
        'gpu_memory_gb': random.choice([4, 8, 12, 24]),
        'cpu_cores': random.randint(4, 64),
        'green_energy_ratio': random.uniform(0, 1),  # 0=dirty, 1=100% renewable
        'latency_ms': random.randint(10, 500),
        'reputation_score': random.uniform(0.5, 1.0)
    })

pd.DataFrame(nodes).to_csv('data/nodes.csv', index=False)

Output: 100 synthetic nodes with varying capabilities.

Step 2: Executor Agent (Core Logic)

# src/executor_agent.py
import networkx as nx
import pandas as pd

class GreenExecutor:
    def __init__(self, nodes_csv):
        self.nodes = pd.read_csv(nodes_csv)
        self.G = self._build_graph()

    def _build_graph(self):
        G = nx.Graph()
        for _, node in self.nodes.iterrows():
            G.add_node(node['node_id'], **node.to_dict())
        return G

    def allocate(self, task, exclude=()):
        """
        Green-first allocation:
        1. Filter by hardware specs
        2. Prioritize renewable energy
        3. Consider reputation

        exclude: optional collection of node IDs to skip (e.g. when picking a backup)
        """
        # Hardware filter
        candidates = [
            (nid, data) for nid, data in self.G.nodes(data=True)
            if data['gpu_memory_gb'] >= task['min_gpu_gb'] and nid not in exclude
        ]
        if not candidates:
            raise ValueError("No node meets the task requirements")

        # Green scoring
        scores = {
            nid: (
                0.5 * data['green_energy_ratio'] +
                0.3 * data['reputation_score'] +
                0.2 * (1 / data['latency_ms'])  # Lower latency = higher score
            )
            for nid, data in candidates
        }
        best_node = max(scores, key=scores.get)
        return best_node, scores[best_node]

# Example usage
executor = GreenExecutor('data/nodes.csv')
task = {'min_gpu_gb': 8, 'type': 'inference'}
selected, score = executor.allocate(task)
print(f"Allocated to {selected} (score: {score:.2f})")

Performance: Runs in under 100ms for 10,000 nodes.
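
Rather than taking a latency figure on faith, it is easy to measure on your own machine. The sketch below times the same scoring formula over synthetic in-memory nodes (plain dicts rather than the NetworkX graph, so absolute numbers will differ from the class above). `benchmark_allocation` is a hypothetical helper.

```python
import random
import time


def benchmark_allocation(n_nodes, min_gpu_gb=8, seed=0):
    """Time one green-first allocation pass over n_nodes synthetic nodes."""
    rng = random.Random(seed)
    nodes = {
        f"NODE_{i:05d}": {
            "gpu_memory_gb": rng.choice([4, 8, 12, 24]),
            "green_energy_ratio": rng.uniform(0, 1),
            "latency_ms": rng.randint(10, 500),
            "reputation_score": rng.uniform(0.5, 1.0),
        }
        for i in range(n_nodes)
    }
    start = time.perf_counter()
    # Filter by hardware and score in a single linear pass.
    scores = {
        nid: 0.5 * d["green_energy_ratio"]
        + 0.3 * d["reputation_score"]
        + 0.2 * (1 / d["latency_ms"])
        for nid, d in nodes.items()
        if d["gpu_memory_gb"] >= min_gpu_gb
    }
    best = max(scores, key=scores.get)
    elapsed_ms = (time.perf_counter() - start) * 1000
    return best, elapsed_ms


best, elapsed_ms = benchmark_allocation(10_000)
print(f"Best node: {best}, allocation took {elapsed_ms:.2f} ms")
```

Running it at a few pool sizes (1,000, 10,000, 100,000) shows how the linear scan scales before any indexing is worth adding.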

Step 3: Blockchain Anchoring

# src/blockchain_anchor.py
from web3 import Web3
import hashlib
import json

class TestnetAnchor:
    def __init__(self, provider_url):
        self.w3 = Web3(Web3.HTTPProvider(provider_url))

    def anchor_proof(self, task_id, node_id, result_hash):
        """
        Prepare a proof for anchoring on testnet (Sepolia)
        Cost: ~$0.00 (testnet ETH is free)
        """
        proof_data = {
            'task': task_id,
            'executor': node_id,
            'result': result_hash
        }

        # Create deterministic hash
        proof_hash = hashlib.sha256(
            json.dumps(proof_data, sort_keys=True).encode()
        ).hexdigest()

        # In production: Call smart contract
        # For MVP: build the transaction payload only; it is NOT signed or
        # sent here, so no private key or testnet ETH is needed
        tx = {
            'to': '0x0000000000000000000000000000000000000000',  # Null address
            'value': 0,
            'data': '0x' + proof_hash  # 32-byte digest as hex calldata
        }

        # Placeholder identifier derived from the proof hash
        # (not a real transaction hash)
        return f"sepolia_tx_{proof_hash[:16]}"

# Usage
anchor = TestnetAnchor('https://sepolia.infura.io/v3/YOUR_KEY')
tx_proof = anchor.anchor_proof('task_001', 'NODE_042', 'abc123...')
print(f"Proof anchored: {tx_proof}")
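
Because the proof hash is built from canonical JSON (sort_keys=True), anyone holding the task ID, node ID, and result hash can recompute it and compare it with the anchored value. A minimal sketch follows; `compute_proof_hash` and `matches_anchor` are hypothetical helper names that mirror the hashing step above.

```python
import hashlib
import hmac
import json


def compute_proof_hash(task_id, node_id, result_hash):
    """Recreate the deterministic proof hash from its three inputs."""
    proof_data = {"task": task_id, "executor": node_id, "result": result_hash}
    canonical = json.dumps(proof_data, sort_keys=True).encode()
    return hashlib.sha256(canonical).hexdigest()


def matches_anchor(anchored_hash, task_id, node_id, result_hash):
    """True if the anchored hash corresponds to exactly these inputs."""
    expected = compute_proof_hash(task_id, node_id, result_hash)
    return hmac.compare_digest(anchored_hash, expected)


anchored = compute_proof_hash("task_001", "NODE_042", "abc123")
print(matches_anchor(anchored, "task_001", "NODE_042", "abc123"))
print(matches_anchor(anchored, "task_001", "NODE_999", "abc123"))
```

Changing any single input, such as the executor's node ID, produces a different hash, which is what makes the anchor useful as a tamper check.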

Running the Full Simulation

# demo.ipynb (Jupyter Notebook)
import hashlib
import time

from src.executor_agent import GreenExecutor
from src.verifier_agent import HashVerifier
from src.blockchain_anchor import TestnetAnchor

# Initialize components
executor = GreenExecutor('data/nodes.csv')
verifier = HashVerifier()
anchor = TestnetAnchor('TESTNET_URL')

# Simulate task lifecycle
task = {'id': 'task_001', 'min_gpu_gb': 8}

# 1. Allocation (AI)
start = time.time()
node, score = executor.allocate(task)
alloc_time = time.time() - start

# 2. "Processing" (mocked)
result_hash = hashlib.sha256(f"{task['id']}_{node}".encode()).hexdigest()

# 3. Verification (AI)
is_valid = verifier.verify(result_hash, expected_format="sha256")

# 4. Blockchain anchor
if is_valid:
    proof = anchor.anchor_proof(task['id'], node, result_hash)
    print(f"✅ Task allocated in {alloc_time*1000:.2f}ms → {node}")
    print(f"📜 Proof: {proof}")
else:
    print("❌ Verification failed")

Expected Output (the timing, node ID, and proof value will differ per run because the node data is random):

✅ Task allocated in 12.43ms → NODE_067

📜 Proof: sepolia_tx_a3f8c2b1e4d7f9a2
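
The demo imports HashVerifier from src/verifier_agent.py, which is not shown in this post. A minimal stand-in that is consistent with the call verifier.verify(result_hash, expected_format="sha256") might look like this. It only checks that the value is well-formed hex of the right length, which is an assumption about what the format check is meant to do.

```python
import hashlib
import string

# Expected hex-digest lengths per supported format (assumed set).
_HEX_LENGTHS = {"sha256": 64}


class HashVerifier:
    """Hypothetical format-only verifier for the MVP demo."""

    def verify(self, result_hash, expected_format="sha256"):
        expected_len = _HEX_LENGTHS.get(expected_format)
        if expected_len is None:
            raise ValueError(f"Unsupported format: {expected_format}")
        return (
            isinstance(result_hash, str)
            and len(result_hash) == expected_len
            and all(ch in string.hexdigits for ch in result_hash)
        )


verifier = HashVerifier()
good = hashlib.sha256(b"task_001_NODE_067").hexdigest()
print(verifier.verify(good))
print(verifier.verify("not-a-hash"))
```

A format check like this catches malformed submissions cheaply, but it says nothing about whether the work was actually done; that is what spot re-computation is for.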

Performance Metrics & Benchmarks

Key Metrics to Track

class DePINMetrics:
    def __init__(self):
        self.metrics = {
            'allocation_time_ms': [],
            'green_utilization': [],
            'verification_pass_rate': [],
            'escalation_rate': []  # % requiring human review
        }

    def calculate_green_ratio(self, allocated_nodes):
        if not allocated_nodes:
            return 0.0  # avoid division by zero when nothing was allocated
        return sum(
            node['green_energy_ratio']
            for node in allocated_nodes
        ) / len(allocated_nodes)

Benchmark Targets (MVP):

- Allocation latency: under 50ms for 1,000 nodes

- Green utilization: over 75% when prioritized

- False positive rate: under 5% in verification
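
To compare a run against these targets, the raw measurement lists need to be summarized. Here is a sketch using only the standard library. The 95th-percentile choice and the `summarize` helper are my assumptions, since the targets do not say whether they refer to mean or tail latency.

```python
import statistics


def summarize(allocation_times_ms, verification_results):
    """Summarize raw measurements.

    allocation_times_ms: list of floats, one per allocation (at least two).
    verification_results: list of bools, True when verification passed.
    """
    # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
    p95 = statistics.quantiles(allocation_times_ms, n=20, method="inclusive")[-1]
    pass_rate = sum(verification_results) / len(verification_results)
    return {
        "mean_ms": statistics.mean(allocation_times_ms),
        "p95_ms": p95,
        "verification_pass_rate": pass_rate,
    }


summary = summarize(
    allocation_times_ms=[10.0, 12.0, 11.0, 13.0, 40.0],
    verification_results=[True, True, True, False],
)
print(summary)
```

Tail latency matters here because a single slow allocation can stall a task even when the average looks healthy.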

Risks, Constraints & Mitigation

1. Node Dropout Mid-Task

Problem: Node goes offline during 2-hour training job.

Mitigation:

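# Redundancy heuristic
def allocate_with_backup(task, primary_node):
    # Assumes allocate() accepts an `exclude` collection of node IDs to skip
    # and returns a (node_id, score) tuple
    backup_node, _ = executor.allocate(
        task,
        exclude=[primary_node]
    )
    return {
        'primary': primary_node,
        'backup': backup_node,
        'failover_trigger': 'no_heartbeat_30s'
    }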
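
The 'no_heartbeat_30s' trigger implies that some component tracks when each node last reported in. Below is a minimal sketch of that check, assuming nodes send periodic heartbeats and timestamps come from a monotonic clock. `HeartbeatMonitor` is a hypothetical name.

```python
import time


class HeartbeatMonitor:
    """Hypothetical tracker that decides when to fail over to the backup node."""

    def __init__(self, timeout_s=30.0, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock
        self.last_seen = {}

    def beat(self, node_id):
        # Called whenever a heartbeat arrives from node_id.
        self.last_seen[node_id] = self.clock()

    def active_node(self, allocation):
        """Return the primary while it is alive, otherwise the backup."""
        last = self.last_seen.get(allocation["primary"])
        if last is not None and self.clock() - last <= self.timeout_s:
            return allocation["primary"]
        return allocation["backup"]


# Simulated clock so the example runs instantly.
now = [0.0]
monitor = HeartbeatMonitor(clock=lambda: now[0])
allocation = {"primary": "NODE_001", "backup": "NODE_002"}

monitor.beat("NODE_001")
now[0] = 10.0
before = monitor.active_node(allocation)  # heartbeat is 10 s old
now[0] = 45.0
after = monitor.active_node(allocation)   # heartbeat is 45 s old
print(before, after)
```

Injecting the clock keeps the failover logic testable without sleeping for 30 seconds.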

2. The “Gaming the System” Attack

Scenario: Malicious node fakes high green_energy_ratio to win tasks.

Defense Layers:

- Oracle Integration: Use Chainlink to verify energy data from grid APIs. Blockchain oracles provide trusted external data to smart contracts.

- Reputation Decay: Scores drop if offline or unreliable

- Stake Requirement: Post collateral proportional to claimed green ratio. This uses blockchain staking mechanisms for economic security.
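
Reputation decay can be as simple as an exponential half-life applied to time spent offline. The half-life value and the `decayed_reputation` helper below are illustrative assumptions, not parameters from any production network.

```python
def decayed_reputation(score, hours_offline, half_life_hours=72.0):
    """Halve the reputation score for every half_life_hours spent offline."""
    if hours_offline <= 0:
        return score
    return score * 0.5 ** (hours_offline / half_life_hours)


print(decayed_reputation(0.9, 0))    # no downtime, score unchanged
print(decayed_reputation(0.9, 72))   # one half-life offline
print(decayed_reputation(0.9, 144))  # two half-lives offline
```

A smooth decay like this avoids cliff effects: short outages cost little, while long absences steadily push a node down the ranking.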

3. Organizational Overconfidence

Anti-Pattern: “If it’s on-chain, it must be correct!”

Reality Check: Blockchain proves a computation happened, not that the result is right. Understanding this distinction is crucial for building reliable DePIN systems.

Design Fix:

# Clear API messaging
def get_task_status(task_id):
    return {
        'status': 'verified',
        'proof': '0xabc123...',
        'caveat': 'Verification confirms process integrity, '
                  'not correctness. Use HITL for critical tasks.'
    }

Further Learning & Resources

Essential Reading

- Akash Network Documentation - Production DePIN architecture

- Render Network - GPU marketplace design

- io.net Documentation - AI-specific DePIN case study

- Messari DePIN Sector Report - Comprehensive overview of DePIN networks

- Ethereum Blockchain Documentation - Understanding blockchain fundamentals

- AI Agents Explained - Wikipedia article on intelligent agents

- DeFi (Decentralized Finance) Overview - Learn about DeFi incentive models that inspire DePIN economics

Video Tutorials

Search for:

- “DePIN architecture 2024” on YouTube

- “Solana DePIN tutorial” on YouTube

- “Zero-Knowledge Proofs for developers” on YouTube

Communities

- r/CryptoCurrency (filter for “DePIN” discussions)

- r/MachineLearning (distributed training threads)

Closing: The Architect’s Perspective

DePIN isn’t about replacing AWS. It’s about creating optionality where centralization creates risk. The architecture works because it respects the strengths of each component:

- AI agents = Dynamic optimization at speed

- Blockchain = Immutable accountability at scale

- Human oversight = Final arbiter for edge cases

Remember: Any design forcing AI agent reasoning onto blockchain is already broken. Keep the intelligence off-chain, use blockchain for what it does uniquely well: trustless coordination.

Start with the MVP, measure relentlessly, iterate based on real constraints. The future of compute isn’t just decentralized. It’s intelligently orchestrated.

I’m building the MVP referenced in this post. Follow my progress or connect if you’re working on similar architectures.

Disclaimer: The views and opinions expressed on this site are my own and do not necessarily reflect those of my employer. Content is provided for informational purposes based on my experience building AI systems. Technical implementations and approaches may vary based on specific use cases, organizational requirements, and versions of tools, packages, and software dependencies.

What challenges have you faced building DePIN networks? I’d love to hear about your experiences. Connect with me on LinkedIn or reach out directly.
