Sloperators: Why AI Outputs Need Owners, Not Better Models

AI outputs fail when signals lack owners and judgment.

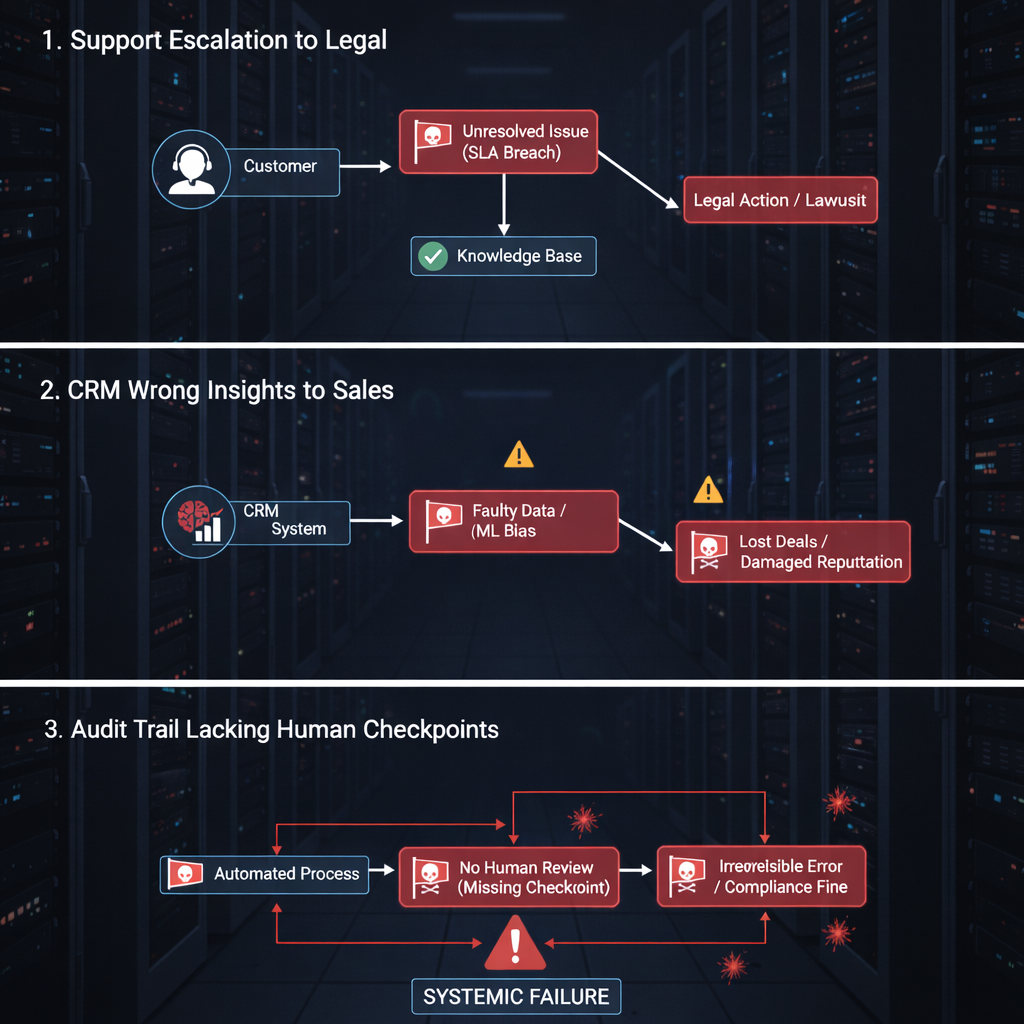

A CRM analyst runs an LLM. Thirty seconds later: a customer risk score. Confident. Polished. Wrong. The system auto-flags the account for collections. Legal gets notified. Three months later someone asks:

“Who verified this?”

No one did.

This wasn’t a model failure. It was a judgment failure [1].

Welcome to sloperator territory - treating AI outputs as decision-ready signals without a human checkpoint [1][2].

What Is a Sloperator?

Definition: A sloperator prompts an AI system, receives fluent output, and ships it unchanged - no source verification, no reasoning validation, no contextual judgment [1][2].

They skip the judgment layer that separates tool usage from autopilot execution [1].

Two engineers. Same AI tool:

- AI-assisted engineer: Uses AI for drafts, boilerplate, and ideation, then tests, rewrites, and owns the final output [1].

- Sloperator: Copies confident output straight into production and moves on [1].

The term gained traction during Linux kernel debates when Linus Torvalds shut down prolonged arguments about “AI slop,” pointing out that rules don’t stop bad behavior - accountability does [3][4][5].

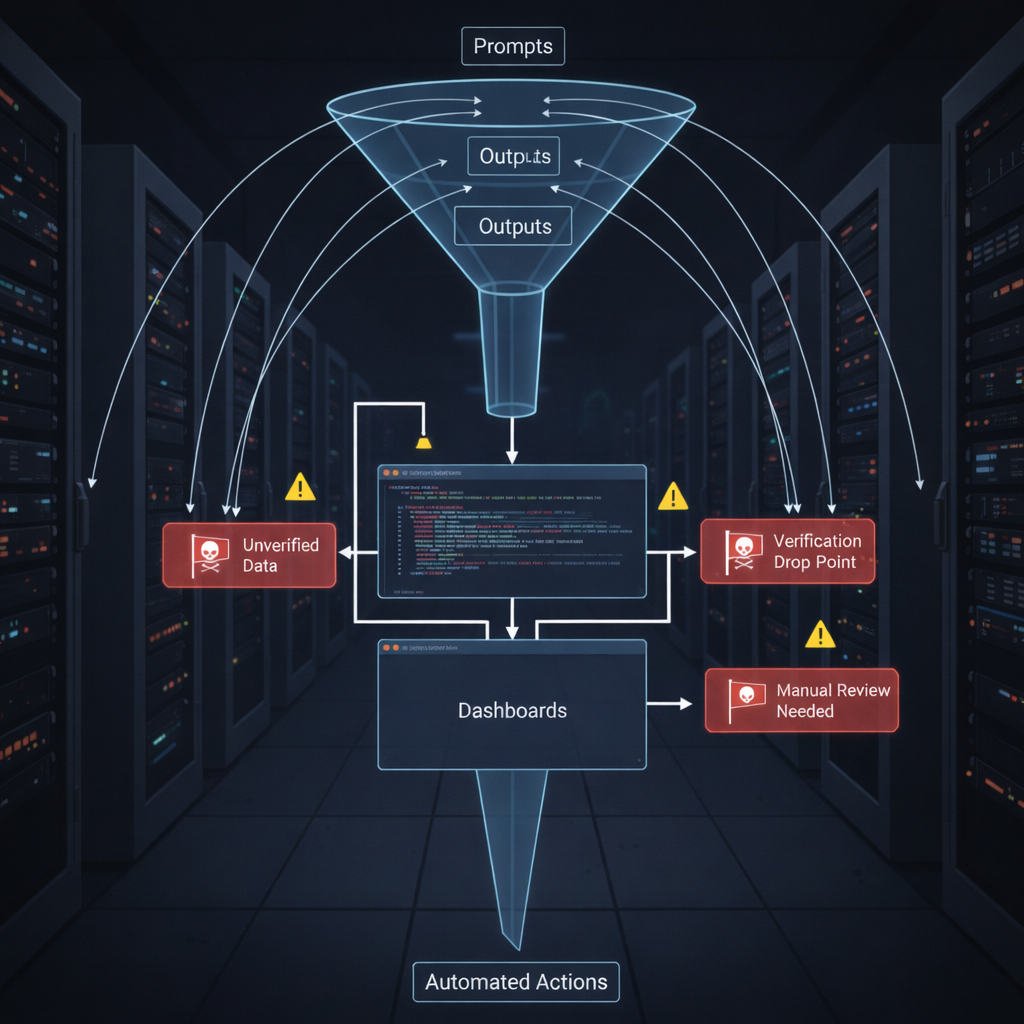

Signals Without Owners

Most AI debates focus on model quality. That’s a distraction.

The real issue is signals without owners [1].

Traditional systems had friction:

- Reports had named authors

- Dashboards had accountable teams

- Decisions could be questioned and traced

AI systems remove friction:

- 10× more signals

- Near-zero cost to generate

- No clear ownership

Confidence quietly becomes the verification mechanism - until downstream systems act on it [1].

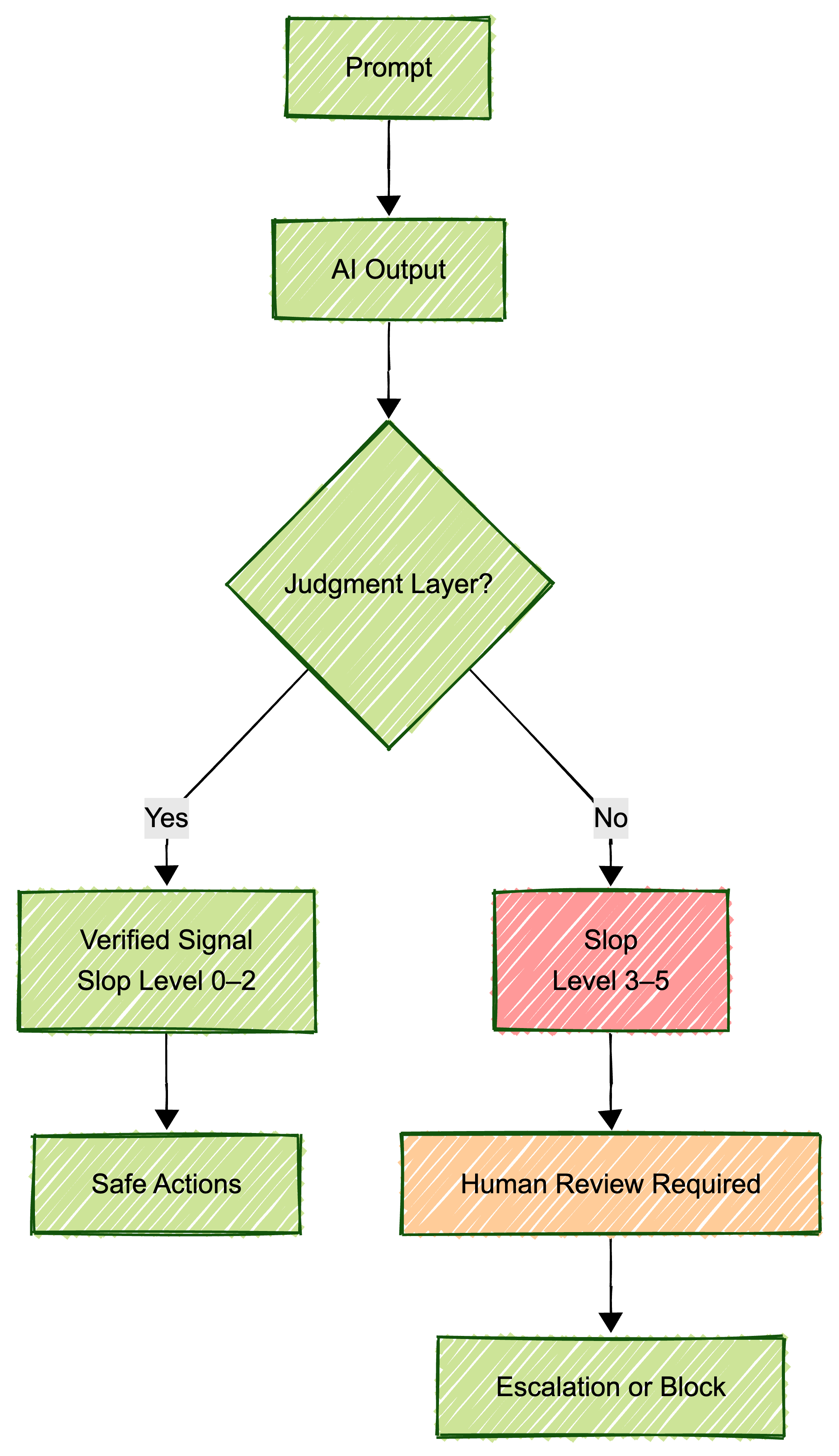

The Slop Scale Framework

To make this visible, apply a Slop Scale - a simple governance model for AI-generated signals [1].

| Level | Description | Required Action |
|---|---|---|
| 0 – Anchored | Evidence-linked, traceable | Safe for decisions |
| 1 – Draft | Exploratory thinking | Brainstorm only |
| 2 – Compressed | Nuance reduced | Light review |
| 3 – Confidence-Heavy | Polished, thin grounding | Flag for escalation |
| 4 – Action-Risky | Triggers real decisions | Named owner required |
| 5 – Slop | Authority without accountability | Block or redirect |
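
One way to make the scale enforceable in a pipeline is to encode it as a lookup. The following is a minimal Python sketch under that assumption, not an implementation from [1]; the names `SlopLevel`, `REQUIRED_ACTION`, and `required_action` are hypothetical and chosen only for illustration.

```python
from enum import IntEnum


class SlopLevel(IntEnum):
    """Slop Scale levels, numbered as in the table above."""
    ANCHORED = 0
    DRAFT = 1
    COMPRESSED = 2
    CONFIDENCE_HEAVY = 3
    ACTION_RISKY = 4
    SLOP = 5


# Required action per level, copied from the table.
REQUIRED_ACTION = {
    SlopLevel.ANCHORED: "Safe for decisions",
    SlopLevel.DRAFT: "Brainstorm only",
    SlopLevel.COMPRESSED: "Light review",
    SlopLevel.CONFIDENCE_HEAVY: "Flag for escalation",
    SlopLevel.ACTION_RISKY: "Named owner required",
    SlopLevel.SLOP: "Block or redirect",
}


def required_action(level: int) -> str:
    """Return the required action; raises ValueError for an unknown level."""
    return REQUIRED_ACTION[SlopLevel(level)]
```

Rejecting unknown levels with an error, rather than falling back to a default, keeps an untagged signal from silently passing as safe.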

Practical rule: Review a small sample of Level-3+ outputs weekly. Track where slop clusters [1].
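
The weekly review could be sketched roughly as follows. This assumes each signal is a dict with `level` and `source` keys and that reviewers add a `judged_slop` flag after inspection; those field names, the default sample size of 20, and both function names are illustrative assumptions rather than anything prescribed by [1].

```python
import random
from collections import Counter


def weekly_review_sample(signals, sample_size=20, seed=None):
    """Pick a random sample of Level-3+ signals for human review."""
    risky = [s for s in signals if s["level"] >= 3]
    rng = random.Random(seed)
    # Never ask for more items than exist, or random.sample raises ValueError.
    k = min(sample_size, len(risky))
    return rng.sample(risky, k)


def slop_clusters(reviewed):
    """Count signals that reviewers judged to be slop, grouped by source."""
    return Counter(s["source"] for s in reviewed if s.get("judged_slop"))
```

Calling `slop_clusters(reviewed).most_common()` then lists the sources that produce the most confirmed slop first.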

What Slop Looks Like in Real Enterprises

Slop doesn’t explode. It drifts.

Example:

- Customer email summarized by AI

- Summary misreads tone as financial risk

- Dashboard escalates severity

- Legal reviews a non-issue

Multiply this by hundreds per quarter and you get:

- Alert fatigue

- Signal distrust

- Quiet operational drag [1][10]

This pattern shows up repeatedly in enterprise retrieval-augmented generation (RAG) and agent workflows when no one owns the source signal [1].

From Sloperator to Signal Engineer

A signal engineer doesn’t reject AI. They govern it.

Typical pattern:

- AI generates a summary

- The engineer tags it as Level 2 (Compressed)

- Flags missing nuance

- Routes it with “human review required”

- Owns the final decision

Slower than blind trust. Faster than cleaning up downstream damage.
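
The pattern above might look like this in code. It is a sketch only: the `TaggedSignal` record, its fields, and the `tag_and_route` function are hypothetical names, and a real workflow would hand the result to whatever review queue the team already uses.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TaggedSignal:
    text: str
    level: int   # Slop Scale level, 0 to 5
    owner: str   # the named person accountable for the final decision
    flags: List[str] = field(default_factory=list)
    routing_label: str = ""


def tag_and_route(summary: str, owner: str, missing_nuance: List[str]) -> TaggedSignal:
    """Tag an AI summary as Level 2, record what it lost, and mark it for review."""
    if not owner:
        raise ValueError("a signal needs a named owner before it is routed")
    signal = TaggedSignal(text=summary, level=2, owner=owner)
    signal.flags.extend(f"missing nuance: {note}" for note in missing_nuance)
    signal.routing_label = "human review required"
    return signal
```

Refusing to route a signal without an owner is the design choice that matters here: the check turns ownership from a convention into a precondition.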

Most Sloperators Aren’t Lazy

This matters.

Most sloperators are:

- Under speed pressure

- Rewarded for output volume

- Using tools designed to sound authoritative

This is a system and incentive problem, not a character flaw [1].

Three questions fix more than any policy:

- Who owns this signal?

- What verifies it?

- What happens if it’s wrong?

If the answer is “nobody,” slop is inevitable.
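
As a rough illustration, the three questions can be turned into a gate that runs before a signal reaches a downstream system. The function name `ownership_gaps` and the choice to treat an empty answer or "nobody" as unanswered are assumptions made for this sketch.

```python
from typing import List, Optional

QUESTIONS = (
    "Who owns this signal?",
    "What verifies it?",
    "What happens if it's wrong?",
)


def ownership_gaps(owner: Optional[str],
                   verifier: Optional[str],
                   failure_plan: Optional[str]) -> List[str]:
    """Return the questions that still have no real answer."""
    answers = (owner, verifier, failure_plan)
    gaps = []
    for question, answer in zip(QUESTIONS, answers):
        # Missing, blank, or "nobody" all count as unanswered.
        if answer is None or answer.strip().lower() in ("", "nobody"):
            gaps.append(question)
    return gaps
```

An empty list means the signal can proceed; anything else names exactly which question is blocking it.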

Bottom Line

AI systems don’t fail because they generate bad outputs. They fail because no one owns the signals they generate [1].

Better models won’t fix that. Better governance will.

The future belongs to engineers who own, verify, and trace signals - not those who generate the most confident output.

Sources

[1] Kerson.ai - Slop, Sloperators, and the Problem of Monitoring Signals at Scale

[2] LinkedIn - Origin of the “Sloperator” term

[3] PC Gamer - Linus Torvalds on AI slop

[4] The Register - Kernel documentation debate

[5] Slashdot - Torvalds shuts down AI slop arguments

[10] ERP Software Blog - Enterprise AI slop risks

Views expressed are my own and do not represent my employer.
